In our previous post, we introduced emotion recognition to mark the release of our automatic Emotion Recognition pack. We talked about how emotions play a prominent role in people's individual and social lives and how strongly they influence behavior and judgment.

We also saw how Natural Language Processing lets us extract the emotions expressed in a text quickly and simply, and how useful that can be in multiple scenarios.

In this post, we explain in depth how to get the most out of our automatic Emotion Recognition pack, and we go over the criteria and considerations behind our approach.

Plutchik’s Wheel of Emotions

In our first post, we saw different ways of classifying emotions according to different studies. At MeaningCloud, we chose to rely on one of these references in order to build a solid taxonomy. Our Emotion Recognition pack is based on Robert Plutchik’s Wheel of Emotions because of its clarity and potential.

As we already mentioned, Plutchik proposed eight primary emotions: joy, sadness, disgust, trust, anger, fear, surprise and anticipation. The remaining emotions are combinations of these primary emotions that broaden the range of experiences.

These are some of the principles that help us better understand the wheel (represented by colors, layers and relations):

- Opposites: each primary emotion has a polar opposite: joy vs. sadness, trust vs. disgust…

- Combinations: adding up primary emotions generates new ones, such as love (joy + trust), aggressiveness (anticipation + anger) and so on.

- Intensity: each emotion ranges from very weak to very strong: trust goes from acceptance to admiration, anger goes from annoyance to rage.
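The opposites and intensity layers of the wheel can be sketched as two lookup tables. This is only an illustration: the names `OPPOSITES`, `INTENSITY` and `primary_of` are hypothetical, and the terms follow Plutchik's published wheel rather than the pack's internal taxonomy.

```python
# Opposite pairs on Plutchik's wheel, stored once and mirrored below.
_OPPOSITE_PAIRS = [
    ("joy", "sadness"),
    ("trust", "disgust"),
    ("fear", "anger"),
    ("surprise", "anticipation"),
]

# Symmetric lookup: OPPOSITES["joy"] == "sadness" and vice versa.
OPPOSITES = {}
for first, second in _OPPOSITE_PAIRS:
    OPPOSITES[first] = second
    OPPOSITES[second] = first

# Intensity scale for each primary emotion, ordered weakest to strongest.
INTENSITY = {
    "joy": ("serenity", "joy", "ecstasy"),
    "trust": ("acceptance", "trust", "admiration"),
    "fear": ("apprehension", "fear", "terror"),
    "surprise": ("distraction", "surprise", "amazement"),
    "sadness": ("pensiveness", "sadness", "grief"),
    "disgust": ("boredom", "disgust", "loathing"),
    "anger": ("annoyance", "anger", "rage"),
    "anticipation": ("interest", "anticipation", "vigilance"),
}


def primary_of(term):
    """Map an intensity variant (e.g. 'annoyance') to its primary emotion."""
    for primary, scale in INTENSITY.items():
        if term in scale:
            return primary
    return None  # term is not on any intensity scale


print(OPPOSITES["joy"])          # sadness
print(primary_of("admiration"))  # trust
```

Keeping the scales as ordered tuples makes it easy to collapse any intensity variant onto one of the eight primary labels.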

Criteria

It bears repeating that emotion recognition is a very subjective task, so it is essential to specify the considerations we have taken into account. This starts by answering the following question: does the text actually express an emotion explicitly, or is it simply stating a fact?

Let’s take the following sentence as an example:

I am playing video games

The text itself is devoid of any emotion, but one could argue that the fact that they are playing video games implies that it’s something they enjoy, and thus it could be associated with the emotion Joy. Trying to extract this kind of emotional reading is a very murky task, as the emotion can come from the subject, reader or writer of the text rather than from the text itself.

This determines the first criterion for our Emotion Recognition model: we detect the emotions expressed in a text, not the ones that could be inferred from it.

| Without Emotion | With Emotion |
|---|---|
| I’m playing video games | I enjoy video games |
| He died yesterday | What a pity! He is dead 😞 |
| The price is $5 | It is beautiful. How much is it? |
| Good morning. How are you? | How are you? I am excited about her visit! |

So, what does a text that expresses emotions look like? And to which emotion or emotions in Plutchik’s wheel does it correspond? These two questions give us another characteristic of our model: we can experience more than one emotion at the same time, so our model is multilabel.

For example:

In love with the product, I expect to get it soon

This text is categorized as Joy, Trust and Anticipation. According to Plutchik, joy and trust combine to create love, so several emotions can come into play together in a single text.

To sum up, we identify one or more emotions that are explicitly expressed and discard what is merely objective information.
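As a minimal sketch of what a multilabel result makes possible, the snippet below takes a set of detected primary emotions and looks up which combined emotions they form. `DYADS` and `combined_emotions` are hypothetical names; the pairings are Plutchik's primary dyads, and nothing here reflects how the pack itself represents its output.

```python
from itertools import combinations

# Plutchik's primary dyads: adjacent primary emotions and their combination.
DYADS = {
    frozenset({"joy", "trust"}): "love",
    frozenset({"trust", "fear"}): "submission",
    frozenset({"fear", "surprise"}): "awe",
    frozenset({"surprise", "sadness"}): "disapproval",
    frozenset({"sadness", "disgust"}): "remorse",
    frozenset({"disgust", "anger"}): "contempt",
    frozenset({"anger", "anticipation"}): "aggressiveness",
    frozenset({"anticipation", "joy"}): "optimism",
}


def combined_emotions(labels):
    """Return the sorted dyad names whose two components are both in `labels`."""
    found = set()
    for pair in combinations(sorted(labels), 2):
        dyad = DYADS.get(frozenset(pair))
        if dyad is not None:
            found.add(dyad)
    return sorted(found)


# Labels assigned to "In love with the product, I expect to get it soon".
labels = {"joy", "trust", "anticipation"}
print(combined_emotions(labels))  # ['love', 'optimism']

# A purely factual text yields no labels, and therefore no combinations.
print(combined_emotions(set()))   # []
```

An empty label set is a natural way to represent text that only states objective information.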

Approaches to Automatic Emotion Recognition

Now that we know some of the basic characteristics our model will have, let’s see how we can approach its implementation.

There are three main approaches for Emotion Recognition:

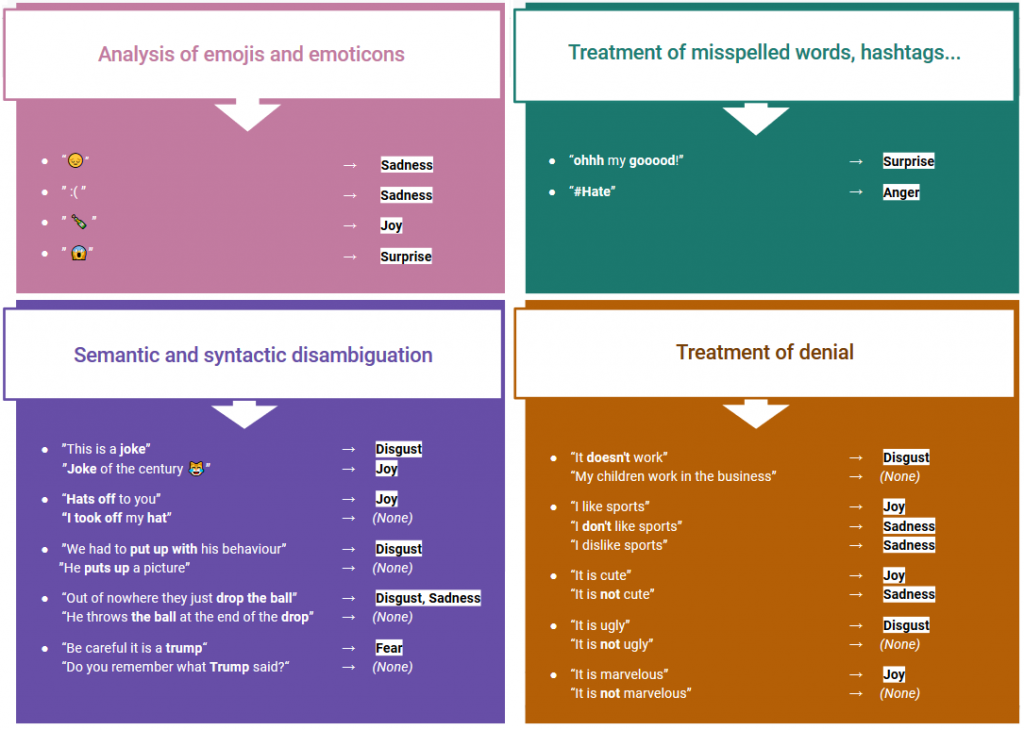

- Semantic approaches, which often use keywords and take advantage of the morphological and semantic aspects of the texts, as well as grammatical aspects such as the treatment of negation.

- Learning-based methods, which try to detect emotions based on a previously trained classifier, applying different machine learning algorithms.

- Hybrid approaches, which combine semantic and learning-based methods.
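To make the first of these approaches more concrete, here is a deliberately tiny keyword matcher with a naive negation rule. It is a toy illustration only, not MeaningCloud's implementation: the lexicon, the negation list and the function name `detect_emotions` are all invented for this example, and a real semantic engine relies on full morphosyntactic analysis rather than token lookups.

```python
import re

# Hypothetical toy lexicon mapping keywords to primary emotions.
LEXICON = {
    "enjoy": "joy",
    "beautiful": "joy",
    "pity": "sadness",
    "afraid": "fear",
    "excited": "anticipation",
}

# Hypothetical negation cues; a keyword directly after one is ignored.
NEGATIONS = {"not", "never", "no", "don't"}


def detect_emotions(text):
    """Return the set of emotions whose keywords appear un-negated in `text`."""
    tokens = re.findall(r"[a-z']+", text.lower())
    labels = set()
    for index, token in enumerate(tokens):
        emotion = LEXICON.get(token)
        if emotion is None:
            continue  # not an emotion keyword
        if index > 0 and tokens[index - 1] in NEGATIONS:
            continue  # naive negation handling: skip negated keywords
        labels.add(emotion)
    return labels


print(detect_emotions("I enjoy video games"))         # {'joy'}
print(detect_emotions("I am playing video games"))    # set()
print(detect_emotions("I do not enjoy video games"))  # set()
```

Even this simplified version shows why negation matters: without the check, "I do not enjoy video games" would be labeled Joy.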

At MeaningCloud, we use our DeepCategorization API, which is based on powerful morphosyntactic and semantic analysis, to create our own emotion recognition model according to the defined criteria. In other words, we use a semantic approach.

Why use MeaningCloud

These are some of the key differentiators:

- Multi-language: Available in English and Spanish.

- Valuable insights: Use of a standard well-known taxonomy.

- Accessible and easy to use: Integrate it into your applications and embed it into your workflow through our standard APIs, as well as our add-in for Excel and Google Sheets.

- Justification of the result of the categorization and possibility of continuous improvement.

- Combine it with other MeaningCloud features and products to gain a deep understanding of your users, customers, employees and other stakeholders.

There are two possible ways to test the Emotion Recognition functionality in MeaningCloud:

- Requesting the 30-day free trial period that we offer for all our packs.

- Subscribing to the pack you are interested in.

For any questions, we are available at support@meaningcloud.com.