According to IBM, “Cognitive Computing systems learn and interact naturally with people to extend what either humans or machines could do on their own. They help human experts make better decisions by penetrating the complexity of Big Data.” Dharmendra Modha, Manager of Cognitive Computing at IBM Research, describes cognitive computing as an algorithm able to solve a vast array of problems.

With this definition in mind, it seems that this algorithm requires a way to interact with humans in order to learn and to think as they do. Nice, great words! Still, this is the same well-known goal of Artificial Intelligence (AI), a more common name that almost everybody has heard of. Why change it? Well, when a company is investing at least $1 billion in something, it must sound cool and fancy enough to draw people’s attention, and AI sounds rather old-fashioned. Nevertheless, machines still cannot think! And I believe it will take some time before they can.

How does Cognitive Computing work? According to the given definition, some kind of voice and image processing must be integrated to enable human-machine interaction. I am not an expert on image processing, but voice recognition systems, dialogue management models and Natural Language Processing (NLP) techniques have been studied for a while. Even Question Answering methods (i.e. the ability of a software system to return the exact answer to a question instead of a set of documents, as traditional search engines do) have been studied in depth. We ourselves have been researching this topic since 2007 (and still are), which resulted in the development of virtual assistants, a combination of dialogue management and question answering techniques. Do you remember Anna, Ikea’s virtual assistant? In spite of the fame she gained at the time, she is no longer in service. Perhaps, for users, that kind of interaction through a website was not effective enough. On the other hand, virtual assistants like Siri, backed by an enormous company such as Apple, are gaining attention. There are other virtual assistants for environments other than iOS, but they are far less known, perhaps because the companies behind them are much smaller than Apple.

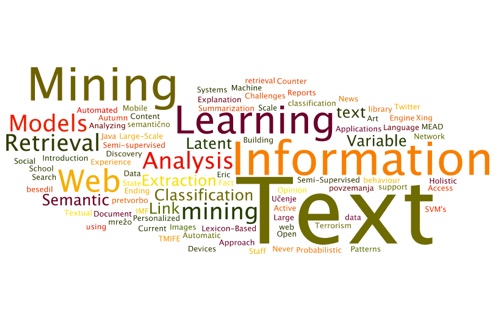

Several aspects of the thinking capabilities required by this algorithm have to do with Machine Learning. There are many well-known algorithms that can generate models from a set of examples or even from raw data (in the case of unsupervised learning). This enables a machine to learn how to classify things or to group items together, like a baby piling up those coloured geometric pieces. So, by combining Machine Learning and NLP models, it is possible for a machine to understand a text. This process is what we call Structuring Unstructured Data (much less fancy than Cognitive Computing). That is, making your information actionable. We have been working on this for several years, but now it is called cognitive computing.
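
To make the idea of "generating a model from a set of examples" a bit more tangible, here is a minimal sketch in Python. It is only an illustration, not the technique used in any particular product: it assumes a tiny, made-up training set with two hypothetical labels (`complaint` and `praise`) and uses a plain multinomial Naive Bayes classifier with add-one smoothing, one of the simplest well-known algorithms of this kind. The class and function names (`NaiveBayesTextClassifier`, `structure`) are invented for this example.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesTextClassifier:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def __init__(self):
        self.word_counts = defaultdict(Counter)  # label -> word frequencies
        self.doc_counts = Counter()              # label -> number of training texts
        self.vocabulary = set()                  # every word seen during training

    def fit(self, examples):
        """Learn word statistics from (text, label) pairs."""
        for text, label in examples:
            self.doc_counts[label] += 1
            for word in tokenize(text):
                self.word_counts[label][word] += 1
                self.vocabulary.add(word)
        return self

    def predict(self, text):
        """Return the label with the highest log-probability for the text."""
        total_docs = sum(self.doc_counts.values())
        vocab_size = len(self.vocabulary)
        words = tokenize(text)
        best_label, best_score = None, float("-inf")
        for label in self.doc_counts:
            # Start from the prior: how frequent this label was in training.
            score = math.log(self.doc_counts[label] / total_docs)
            label_total = sum(self.word_counts[label].values())
            for word in words:
                count = self.word_counts[label][word]
                # Add-one smoothing keeps unseen words from zeroing the score.
                score += math.log((count + 1) / (label_total + vocab_size))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical training examples, invented purely for illustration.
TRAINING = [
    ("The delivery was late and the box arrived damaged", "complaint"),
    ("I was charged twice and nobody answers my emails", "complaint"),
    ("The product broke after two days, very disappointed", "complaint"),
    ("Great service, the delivery was fast", "praise"),
    ("I love this product, it works perfectly", "praise"),
    ("Friendly support team, they answered quickly", "praise"),
]

classifier = NaiveBayesTextClassifier().fit(TRAINING)


def structure(text):
    """Attach a structured field (a category) to a piece of free text."""
    return {"text": text, "category": classifier.predict(text)}


if __name__ == "__main__":
    print(structure("My order arrived damaged and I am disappointed"))
    print(structure("Fast delivery and great support"))
```

The `structure` function is the "Structuring Unstructured Data" step in miniature: free text goes in, and a record with a field that other software can act on comes out. A real system would rely on far more training data and on proper linguistic analysis rather than bare word counts.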

So, as you might imagine, Cognitive Computing techniques are not different from the ones we have already developed; a lot of researchers and companies have been combining them. And, if you think about it, does it really matter whether a machine thinks or not? The real added value of this technology is helping humans do their jobs with all the relevant information at hand, at the right moment, so they can make thoughtful and reasonable decisions. This is our goal at MeaningCloud.
